Zhao (Joey) Zhang (张 钊)

I'm currently working as a Senior Research Scientist at Canva, with a focus on Image Generation and Multimodal LLMs.

I completed my Master's degree at Nankai University, where I was under the supervision of Ming-Ming Cheng.

Please feel free to contact me at (📮: zzhang🥳mail🔅nankai🔅edu🔅cn).

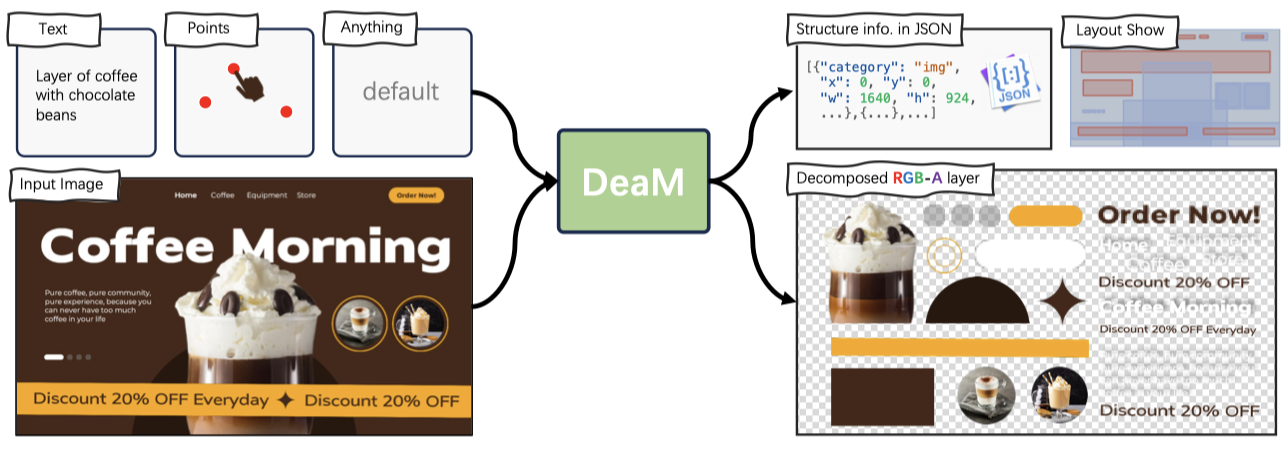

is now live on  , an AI-driven graphic design generation system for multi-layer and editable compositions with strong visual appeal.


was accepted by AAAI 2025. We have unleashed the potential of MLLM in graphic design.


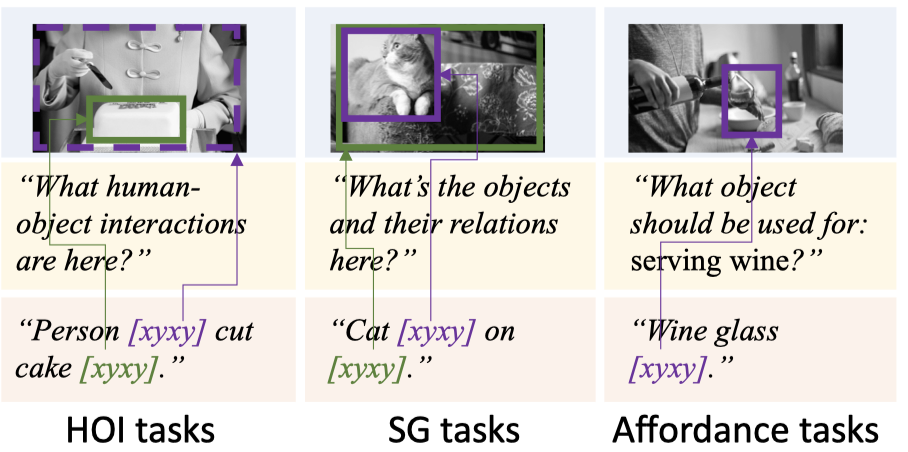

, an awesome MLLM for Referential Dialogue.
